AI Model Global Distribution Infrastructure

Global distribution and inference acceleration for LLMs, image generation, speech recognition and other AI applications: faster, more stable, more secure

Global Nodes

Edge inference close to your users

Latency

Millisecond-level response

Flagship Model Support

Global edge deployment and accelerated inference for mainstream AI models

LLM Inference

Optimized inference for GPT, Claude, Llama and other LLMs

Image Generation

Edge deployment for Stable Diffusion, DALL-E and Midjourney

Speech Recognition

Low-latency inference for Whisper and TTS models

Multimodal Models

Global distribution for multimodal models such as GPT-4V and Gemini

Infrastructure Built for AI

Explore how we help enterprises accelerate global AI application deployment and distribution

Global Smart Routing

3,000+ edge nodes across 200+ countries and regions automatically select the optimal path so inference runs close to the user, delivering ultra-fast AI model distribution
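The page does not say how the "optimal path" is chosen. As a minimal sketch, assuming each node reports a measured round-trip time, a health flag and a load figure (the `EdgeNode` fields and the 0.9 load ceiling are hypothetical), nearby routing can be reduced to discarding unavailable nodes and picking the lowest-latency one that remains:

```python
from dataclasses import dataclass


@dataclass
class EdgeNode:
    name: str
    rtt_ms: float   # hypothetical: measured round-trip time from client to node
    healthy: bool   # hypothetical: result of the most recent health check
    load: float     # hypothetical: utilization, 0.0 (idle) to 1.0 (saturated)


def select_node(nodes: list[EdgeNode], max_load: float = 0.9) -> EdgeNode:
    """Pick the lowest-latency healthy node that still has spare capacity."""
    candidates = [n for n in nodes if n.healthy and n.load < max_load]
    if not candidates:
        raise RuntimeError("no healthy edge node with spare capacity")
    # Sort key is (latency, load): equal latencies go to the less loaded node.
    return min(candidates, key=lambda n: (n.rtt_ms, n.load))


nodes = [
    EdgeNode("tokyo", rtt_ms=12.0, healthy=True, load=0.95),      # too busy
    EdgeNode("singapore", rtt_ms=38.0, healthy=True, load=0.40),
    EdgeNode("hong-kong", rtt_ms=25.0, healthy=False, load=0.10),  # failing
]
print(select_node(nodes).name)  # singapore
```

The closest node is not always the best one: filtering on health and load first means a saturated or failing nearby node gives way to a slightly farther one.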

Cold Start Optimization

Model preloading and caching keep first-inference latency under 100 ms, dramatically improving user experience
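Preloading moves the expensive model load out of the first user request and into node startup. A minimal sketch of the idea, assuming a hypothetical `loader` callable that brings a model into GPU memory and a fixed number of model slots per node, is an LRU cache warmed before traffic arrives:

```python
from collections import OrderedDict
from typing import Callable


class ModelCache:
    """LRU cache of loaded models; a hit skips the expensive cold load."""

    def __init__(self, loader: Callable[[str], object], capacity: int = 2):
        self.loader = loader      # hypothetical: loads weights onto the GPU
        self.capacity = capacity  # how many models fit in GPU memory at once
        self._models: OrderedDict = OrderedDict()
        self.cold_loads = 0       # number of times the slow path was taken

    def preload(self, names: list[str]) -> None:
        # Warm the cache at startup so the first real requests are hits.
        for name in names:
            self.get(name)

    def get(self, name: str) -> object:
        if name in self._models:
            self._models.move_to_end(name)  # mark as most recently used
            return self._models[name]
        self.cold_loads += 1
        model = self.loader(name)
        self._models[name] = model
        if len(self._models) > self.capacity:
            self._models.popitem(last=False)  # evict least recently used
        return model


cache = ModelCache(loader=lambda name: f"<weights:{name}>")
cache.preload(["llama", "whisper"])
cache.get("llama")       # already resident: no cold load
print(cache.cold_loads)  # 2
```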

OpenAI

@OpenAI

GPT-4o now supports real-time voice conversations and visual understanding.

Thanks to the global acceleration provided by Yewsafe AI Gateway, API latency is down 60%.

Anthropic

@AnthropicAI

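Claude 3.5 Sonnet delivers breakthrough gains in code generation and reasoning tasks.

With edge-node optimization, response times for Asia-Pacific users have improved 3x.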

Google DeepMind

@GoogleDeepMind

Gemini Pro API access is now open to developers worldwide.

Smart routing sends every inference request to the optimal node.

Cost Optimization

Smart scheduling, cache optimization and pay-as-you-go pricing reduce inference costs by up to 60%
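One way cache optimization can cut pay-as-you-go spend is to answer repeated identical requests from a response cache instead of re-running the model. The sketch below is illustrative only; `infer` stands in for a hypothetical billable model call, and the page does not say which caching strategy the service actually uses:

```python
import hashlib
import json


class ResponseCache:
    """Serve repeated identical requests from cache instead of re-running inference."""

    def __init__(self, infer):
        self.infer = infer  # hypothetical: the billable model call
        self.store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def key(request: dict) -> str:
        # sort_keys makes the key independent of field order in the request.
        canonical = json.dumps(request, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def complete(self, request: dict) -> str:
        k = self.key(request)
        if k in self.store:
            self.hits += 1
            return self.store[k]
        self.misses += 1  # only misses reach the billable model
        result = self.infer(request)
        self.store[k] = result
        return result


cache = ResponseCache(infer=lambda req: "answer to " + req["prompt"])
cache.complete({"model": "m", "prompt": "hi"})
cache.complete({"prompt": "hi", "model": "m"})  # same request, different field order
print(cache.hits, cache.misses)  # 1 1
```

This is only safe for deterministic requests (for example, temperature 0). If cache hits cost nothing, a hit rate of h leaves (1 − h) of the baseline inference bill.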

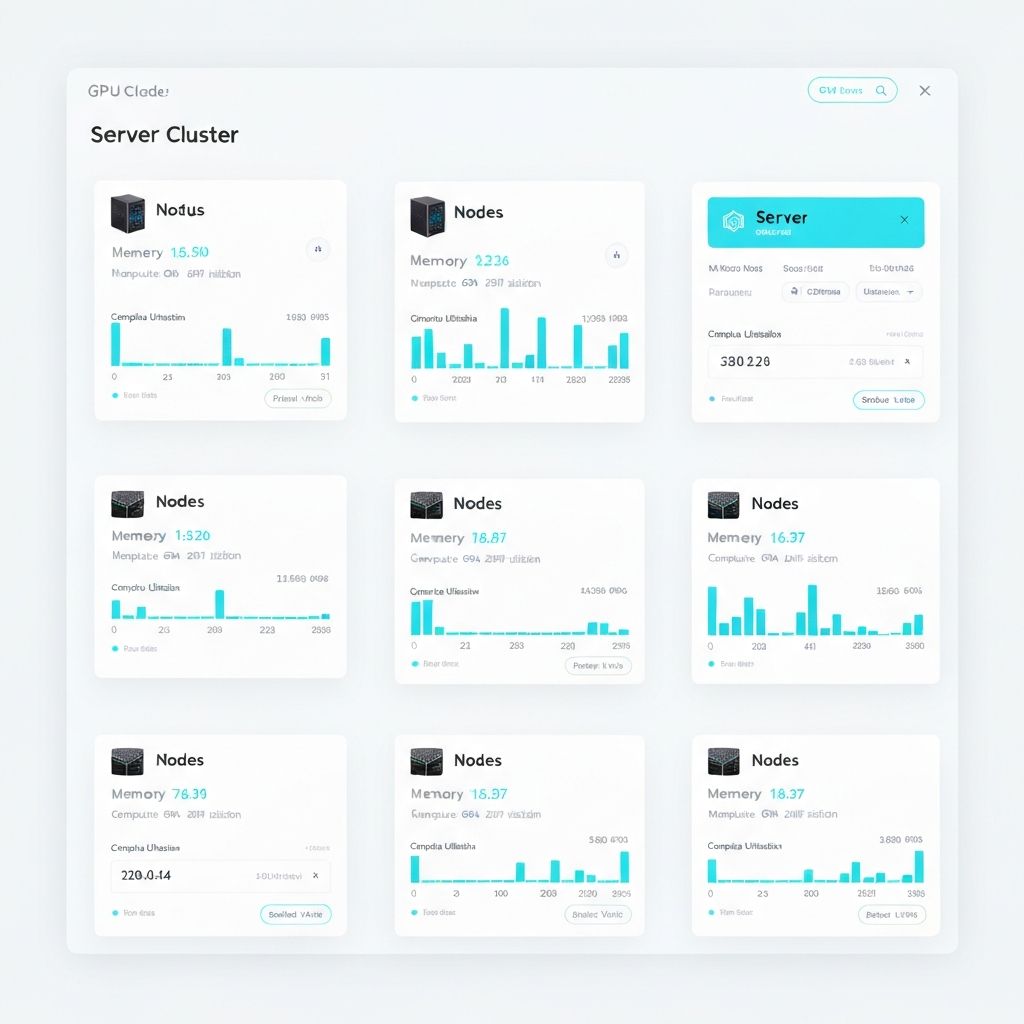

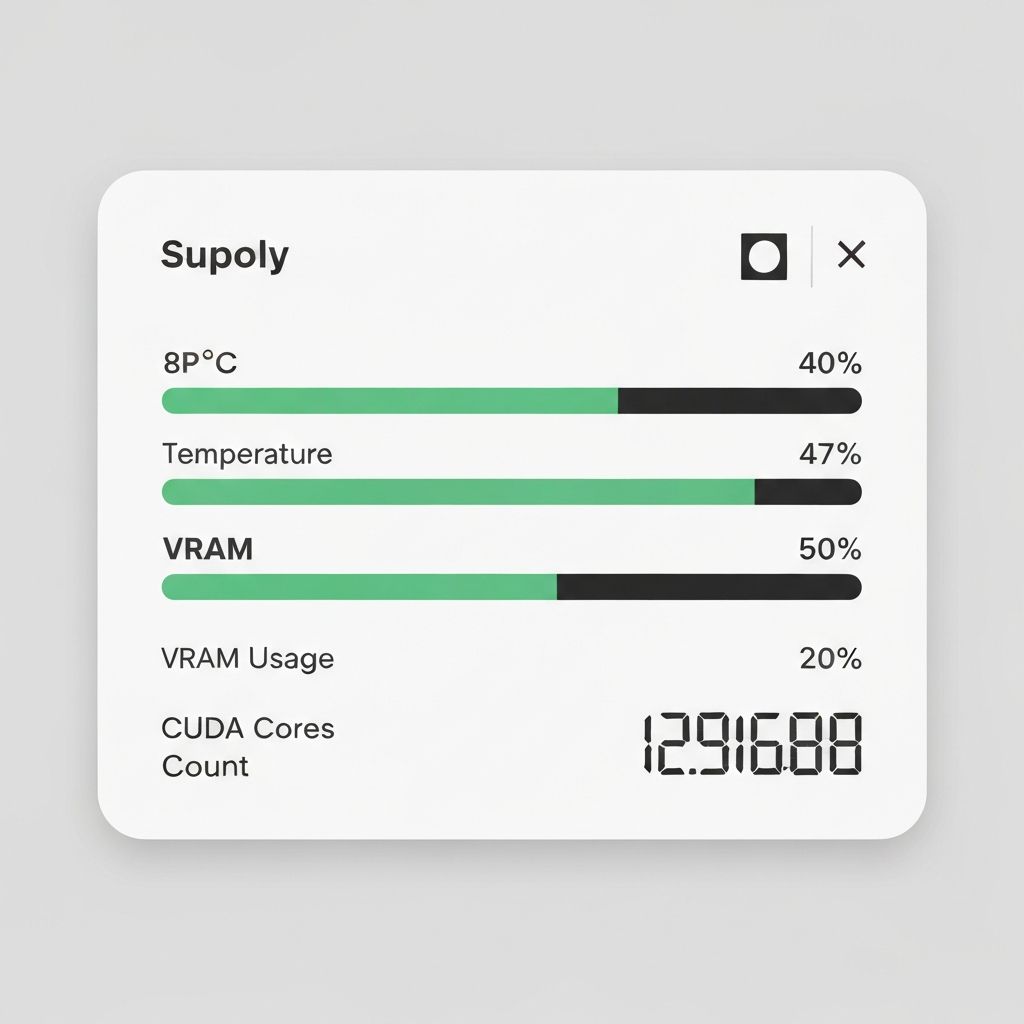

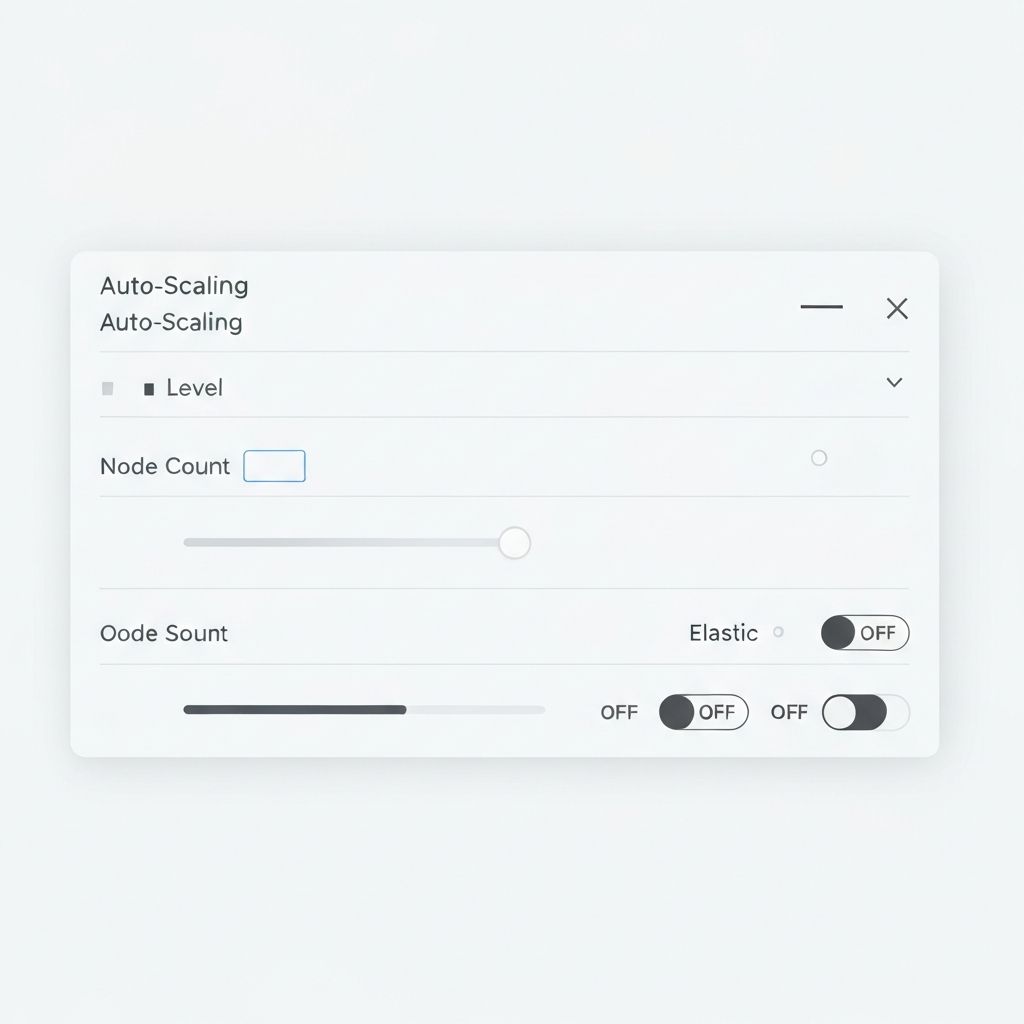

GPU Edge Clusters

Globally distributed GPU pools auto-scale to absorb traffic spikes and keep inference services stable
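The page does not say how scaling decisions are made. One widely used rule, the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, sets replicas to ceil(current × observed / target utilization). A sketch applying it to a hypothetical GPU pool (the bounds and the 10% tolerance are assumed defaults):

```python
import math


def desired_replicas(current: int, current_util: float, target_util: float,
                     min_replicas: int = 1, max_replicas: int = 64,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling: desired = ceil(current * current_util / target_util)."""
    ratio = current_util / target_util
    # Ignore small deviations from the target to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current
    desired = math.ceil(current * ratio)
    # Clamp to the pool's configured bounds.
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, 0.75, 0.5))  # 6: traffic spike, scale out
print(desired_replicas(6, 0.25, 0.5))  # 3: quiet period, scale in
```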

API Gateway

Unified rate limiting, quotas and monitoring make it easy to manage API calls across multiple models
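Gateways commonly enforce rate limits with a token bucket, though the page does not name the algorithm used here. A minimal sketch, assuming one bucket per API key (the key-to-bucket mapping is left out); the injectable `clock` exists only so the behavior can be shown deterministically:

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start full so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


t = [0.0]  # fake clock for the example
bucket = TokenBucket(rate=2.0, capacity=3.0, clock=lambda: t[0])
print([bucket.allow() for _ in range(4)])  # [True, True, True, False]
t[0] += 0.5  # half a second refills one token at 2 tokens/s
print(bucket.allow())  # True
```

Capacity controls the allowed burst and rate controls the sustained throughput. A quota is the same idea with a refill period of a billing cycle instead of a second.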

Four Simple Steps to Global Deployment

Connect API

One-line integration, OpenAI API compatible

Smart Routing

Automatically selects the optimal edge node for inference

Accelerated Inference

GPU edge clusters with model caching

Return Results

Low-latency response with end-to-end encryption
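The first step, Connect API, relies on OpenAI API compatibility: an existing client keeps its request format and only changes the endpoint it talks to. A standard-library sketch that builds, but does not send, an OpenAI-style Chat Completions request; `GATEWAY_BASE_URL` and `API_KEY` are hypothetical placeholders, not values from this page:

```python
import json
import urllib.request

# Hypothetical values: substitute the endpoint and key issued by your gateway.
GATEWAY_BASE_URL = "https://gateway.example.com/v1"
API_KEY = "sk-your-key"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style Chat Completions request aimed at the gateway."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request("gpt-4o", "Hello!")
print(req.full_url)  # https://gateway.example.com/v1/chat/completions
# Sending it needs a real endpoint and key:
# with urllib.request.urlopen(req) as resp: print(json.load(resp))
```

With the official OpenAI Python SDK, the equivalent "one line" would be passing the gateway address as the client's `base_url`, assuming the gateway is compatible as described.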

Trusted by Industry Leaders Worldwide

Leading enterprises choose our AI distribution acceleration service to deliver millisecond-level AI inference responses to their global users, significantly improving user experience and business efficiency.

Frequently Asked Questions

Common questions about AI Gateway

Our technical team is ready to help and responds quickly.

Start Your Global AI Journey

Start a free trial and experience enterprise-grade AI distribution acceleration